Unleash the power of AI writing with our free tools

Open source AI prompts & shortcuts designed by the community, capable of multi-prompt chains & built to use OpenAI's GPT technology.

Create your own personal prompt library and combine the power of shortcuts with our AI enhanced text editor.

Try a Shortcut - It's Free!

Key Features

Bring Your Own Key

Use your own API key from OpenAI. It's extremely cheap, and you only pay for what you use.

Write 1 Million Words For $5

ChatGPT on Steroids

Built to use OpenAI's GPT technology, Lyla also uses her own AI algorithms to enhance results.

10x Your Productivity

Let us handle the complex prompt engineering so you can focus on getting things done.

Shortcuts Library

Future Proof Yourself

AI is changing everything. By offering popular new shortcuts every day, Lyla helps you stay ahead of the curve.

Start Writing Now

Trusted by

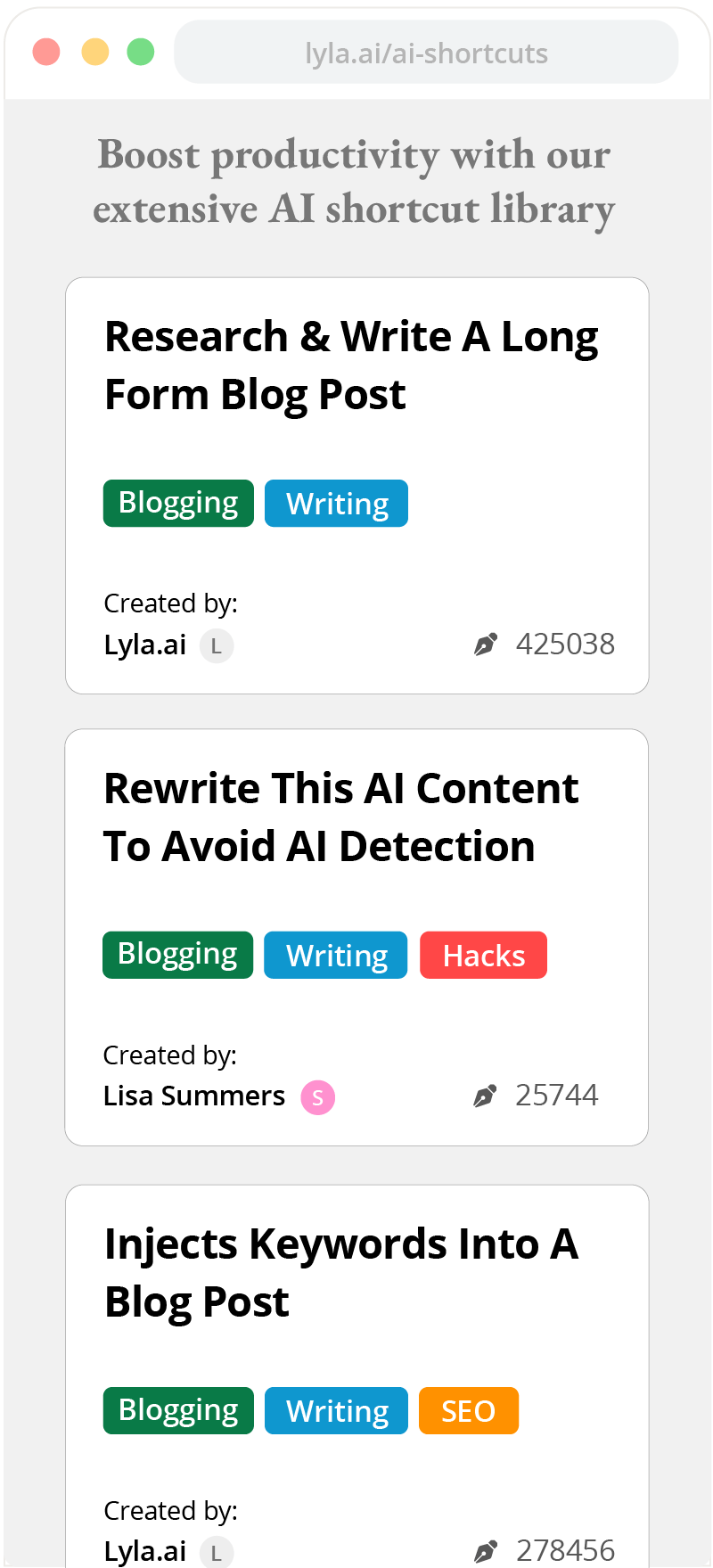

Popular Shortcuts

Blogging

- Write a well-researched blog post about any subject in a writing style of my choosing

- Improve the paragraph or change its tone

- Help me analyze and end my writer's block

- Create a thorough blog outline on a subject I choose

- Research a topic and provide detailed notes of your findings

- Generate a list of topics to write about

- Write several engaging titles for a blog post

SEO

- Write a list of blog topic ideas related to the keywords I give you

- Identify high intent keywords in a chosen area

- Create a list of variations of a keyword I give you

- Create a list of long tail keywords targeting a specific topic

- Create a list of long tail keywords

- Inject a list of keywords into an existing paragraph

FAQs

Our AI writing shortcuts are free to use. All that is required is an API key from OpenAI, which anyone can sign up for and get.

At signup, OpenAI even gives you $5 worth of credits for free. That should last you several weeks' worth of writing!

Check out this simple 3 step guide to help you get started.

We don't want our customers to worry about hitting their "word limit" and their usage being throttled each month.

Lyla is powered by OpenAI's powerful GPT software, which they offer to everyone. But rather than charging a premium for the words you generate, we pass along OpenAI's wholesale pricing to you!

On Lyla, you can generate over 1 million words for $5, or roughly 5 cents per 10,000 words!
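The arithmetic behind that figure can be checked with a quick sketch. It assumes a flat rate of $5 per 1 million words, as quoted above; the function name `cost_usd` is made up for illustration, and actual OpenAI billing is per token and varies by model.

```python
def cost_usd(words, usd_per_million_words=5.0):
    """Estimated cost in US dollars, assuming a flat per-word rate."""
    return words * usd_per_million_words / 1_000_000


# Cost of a 10,000-word batch and of the full 1 million words
print(f"${cost_usd(10_000):.2f}")      # $0.05
print(f"${cost_usd(1_000_000):.2f}")   # $5.00
```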

Learn More

It's easy! Once you've signed up on Lyla, just follow these steps:

- Sign up on openai.com & get your own API key

- Add your API key on Lyla's website, where it is 100% secure and can only be used by you

- You're done! The words you generate will be billed through OpenAI at their wholesale API pricing

Check out our API guide for more detailed instructions.
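For readers curious what "bring your own key" means in practice, here is a minimal sketch of how a personal key is attached to a request to OpenAI's chat completions endpoint. It only builds the request and does not send it. The helper name `build_chat_request` and the default model are assumptions for illustration, and how Lyla itself stores or sends your key is not described here.

```python
import json
import urllib.request

OPENAI_CHAT_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key, prompt, model="gpt-3.5-turbo"):
    """Build (but do not send) a chat completion request.

    The model name is an assumed default; use whichever model your key can access.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENAI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # The key travels as a bearer token, so usage is billed to its owner.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key for illustration only
request = build_chat_request("sk-your-key-here", "Write three blog titles about tea.")
```

Passing `request` to `urllib.request.urlopen` would send it and bill the usage to the key's owner.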

An AI writing shortcut on Lyla is made up of one or more prompts that have been designed to get the most out of GPT's writing capabilities.

Our multi-prompt AI shortcuts are especially powerful and can greatly improve ChatGPT's output while offering repeatable results.

No more cutting and pasting prompts.

All you have to do is answer a few questions and our shortcuts will do the rest.
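To make the idea of a multi-prompt shortcut concrete, here is a rough sketch of a prompt chain: the user's answers fill in each prompt template, and each prompt's output is fed into the next. This is not Lyla's actual implementation. The names `run_shortcut` and `echo_model` and the `{previous}` placeholder are hypothetical, and a stand-in function replaces the real GPT call so the example runs offline.

```python
from typing import Callable, Dict, List


def run_shortcut(
    templates: List[str],
    answers: Dict[str, str],
    model: Callable[[str], str],
) -> str:
    """Run the prompts in order, feeding each output into the next prompt."""
    previous = ""
    for template in templates:
        # Fill in the user's answers plus the previous step's output.
        prompt = template.format(previous=previous, **answers)
        previous = model(prompt)
    return previous


def echo_model(prompt: str) -> str:
    """Stand-in for a real GPT call; it just wraps the prompt it received."""
    return f"[model output for: {prompt}]"


# A two-step shortcut: outline first, then a post written from that outline.
outline_then_post = [
    "Create a blog outline about {topic}.",
    "Write a blog post in a {style} style from this outline: {previous}",
]

result = run_shortcut(
    outline_then_post,
    {"topic": "tea", "style": "friendly"},
    echo_model,
)
print(result)
```

Swapping `echo_model` for a function that calls the GPT API would turn the same chain into a working shortcut.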

Like programming, prompt design is a skill that takes time to develop.

But by using our shortcuts, you can take advantage of our years of experience in complex prompt engineering.

Still have questions?

- Check out our documentation for more useful information

- Send an email to [email protected]

- Send a question through our contact form

- Check out our Reddit Community